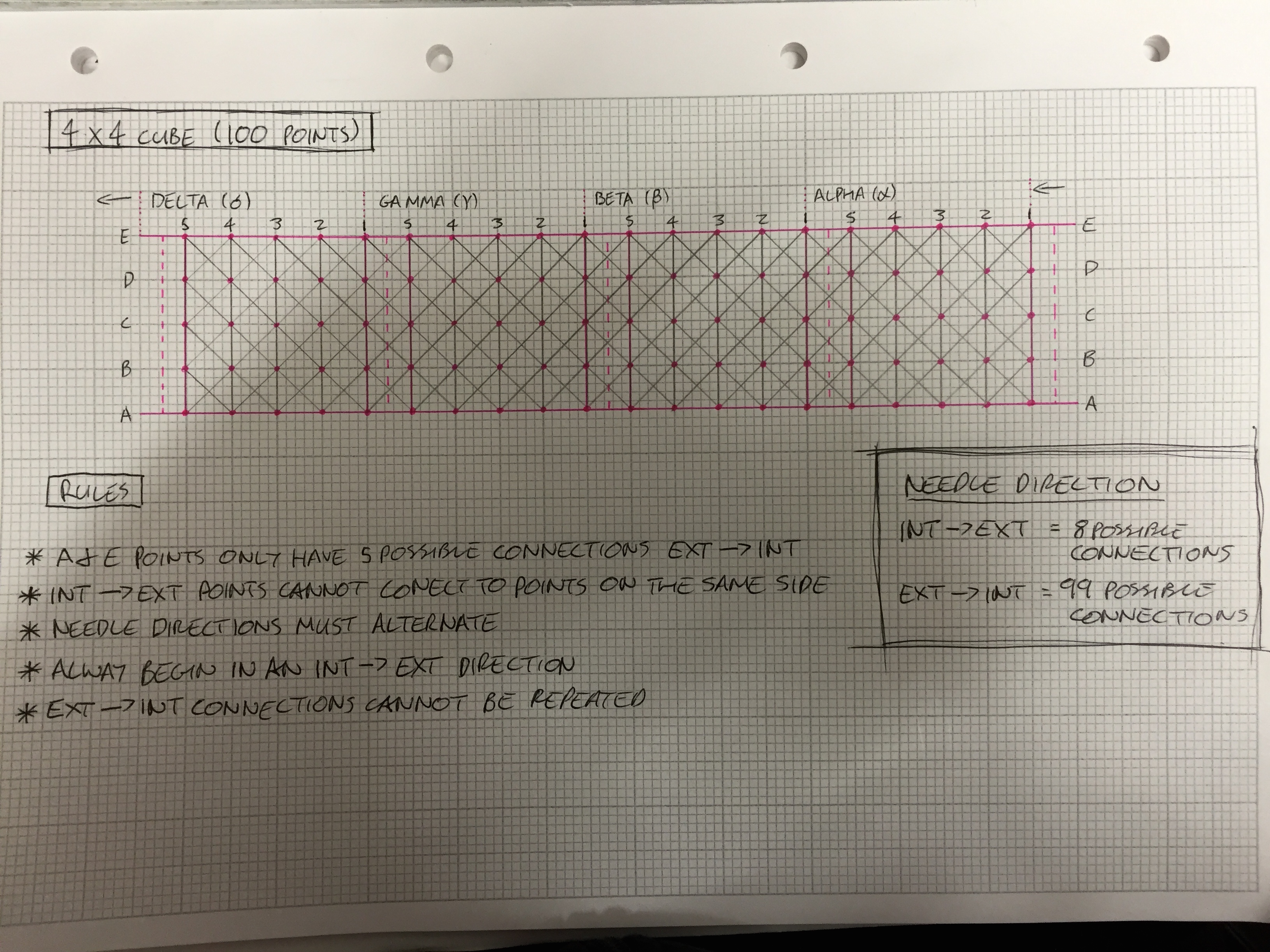

I spent much of Tuesday wrestling with code: how to get data out of the headset and how to interpret it visually. On Monday night, armed with the collected EEG wave recordings, I could see patterns in activity, and I sat in the bar (late) struggling to work out how best to turn the changes in state into patterns. With some persuasion from the group I put the laptop away and decided to sleep on it. In the morning I realised that turning data into patterns is how I have worked before, setting parameters so that results that reflect flow would reveal a clear pattern. I didn’t want to spend my time at the lab doing things I had done before; I wanted to challenge myself and develop something new.

A group brainstorm/therapy session, sat in the morning sun in the park, helped me to reflect on these thoughts and throw new light onto them. It occurred to me that by monitoring the brain activity of seasoned makers, the patterns reflecting flow could become quite stagnant and uninteresting, as flow is likely to be consistent. I had been thinking a lot about how Shelly discussed collaborating with herself, working with code in such a way that she didn’t know what the outcomes would be in her live sounds. Could this be applied to more traditional acts of making? Could flow be disrupted? Or could some visual code be used to encourage flow?

Thinking back to the First Person Viewer headsets and a discussion I’d had with Dave, I decided to explore a way of coding the brain wave data to disrupt the view of making, using a live feed of the hands. With much help from Dave, Ross and Alex I ended up with a sample bit of code to test.

It turns out that not being able to wear your glasses whilst wearing the viewer made sewing difficult enough, and the resulting stitches were far from my best, but the pattern obscuring the view did force me to try to calm down to clear the view.
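For anyone curious how that kind of feedback loop might work, here is a minimal sketch. It is not the code we tested at the lab. It assumes the headset can be reduced to a single "calm" score between 0 and 1, and every function name and parameter below is hypothetical. The idea is simply that a calmer reading makes the obscuring pattern more transparent over the live feed.

```python
# Hypothetical sketch: the calm score, names and default values are assumptions,
# not the code used at the lab or any headset's real API.

def overlay_opacity(calm, floor=0.0, ceiling=0.9):
    """Map a calm score in [0, 1] to pattern opacity: calmer means a clearer view."""
    calm = min(1.0, max(0.0, calm))  # clamp noisy or out-of-range readings
    return ceiling - (ceiling - floor) * calm


def smooth(previous, reading, alpha=0.2):
    """Exponential moving average so the overlay does not flicker between readings."""
    return previous + alpha * (reading - previous)


def blend_pixel(frame_px, pattern_px, opacity):
    """Alpha-blend one greyscale pixel of the pattern over the camera frame."""
    return round((1.0 - opacity) * frame_px + opacity * pattern_px)


if __name__ == "__main__":
    calm = 0.0
    # Pretend readings drifting from agitated towards calm.
    for reading in [0.1, 0.3, 0.6, 0.9, 1.0]:
        calm = smooth(calm, reading)
        opacity = overlay_opacity(calm)
        print(f"calm={calm:.2f} opacity={opacity:.2f} pixel={blend_pixel(100, 255, opacity)}")
```

The smoothing step is a design choice rather than a necessity: without it, a jittery signal would make the pattern flash on and off, which would probably be more distracting than the stitching itself.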
